Readers,

I recommend a recent Foreign Affairs article by Ian Bremmer (President of Eurasia Group - disclosure, my old company) and Mustafa Suleyman (CEO of Inflection AI and co-founder of DeepMind).

I recommend it because I disagree with a lot of it and want to explain why.

I, of course, have great respect for Ian (and Mustafa!). He’s a genius and an inspiration to people like me. But his analysis has flaws. If nothing else, I hope pointing them out can add to the debate and the thinking.

Here goes!

George

(@coemannn)

THE BIG TAKE

A rebuttal to Ian Bremmer & Mustafa Suleyman on AI policy

Ian Bremmer and Mustafa Suleyman co-wrote “The AI Power Paradox”, released last week in Foreign Affairs. To avoid misrepresenting what is a long and nuanced article, I’ll summarize the key point by restating the most specific part of the central recommendation:

Policymakers should create at least three overlapping governance regimes: one for establishing facts and advising governments on the risks posed by AI, one for preventing an all-out arms race between them, and one for managing the disruptive forces of a technology unlike anything the world has seen.

This three-pronged framework is useful and hard to argue with. But the logic informing it, and the governance models the authors then detail, are flawed.

In general, the analysis calls for upending how we think about governance in the face of AI and favors giving tech companies a larger seat at the table. My view? It’s way too early to be making such pronouncements — especially when this approach would bake in an assumption based on the last ten years of power dynamics (i.e., tech companies being more capable than governments) that is just starting to change.

To get more specific, here are four main rebuttals to assumptions in the analysis:

Their take: AI is unlike any other technology.

My take: It’s much too early to base bold governance changes on this assumption.

Their take: AI can’t be governed like other technologies.

My take: Actually, using existing tech regulatory powers can and should be the focus right now.

Their take: AI companies need more of a seat at the table.

My take: They have plenty already, and they don’t have some magic insight that warrants more.

Their take: Global governance should be the central focus.

My take: This is all well and good, but impractical at best and a distraction at worst.

Here’s the breakdown:

Their take: AI is unlike any other technology.

My take: It’s much too early to base bold governance changes on this assumption.

Let’s start here: “Generative AI is only the tip of the iceberg. Its arrival marks a Big Bang moment, the beginning of a world-changing technological revolution that will remake politics, economies, and societies.”

The whole article rests on this premise - that AI is fundamentally different from recent experiences we have with technological innovation.

This, in many ways, isn’t true, which is apparent right within the article: “Twitter and TikTok are powerful, but few think they could transform the global economy”. The noticeable flaw in this argument is that TikTok is powered by AI; its recommendation algorithm is its key innovation. You could argue generative AI is a step-change compared to recommendation algorithms. But if we’re talking about policy toward AI as a whole (which would be odd if we weren’t), then we need to consider all of its strands rather than dismiss older products that are themselves AI-powered as irrelevant to the AI policy discussion.

There have been some significant over-promises about some technology trends in recent years. I happen to personally think that AI is different. But it is “drinking the Kool-Aid” to state with certainty now that this is a unique situation.

Even if AI lives up to the hype, the urgency demanded to create a unique governance structure is belied by the assumed 10+ year outlook (their scenario talks about 2035): plenty of time for more to be learned before deciding we need to “rethink basic assumptions about the geopolitical order” and bake this rethink into permanent governance structures.

Their take: AI can’t be governed like other technologies.

My take: Actually, existing tech regulatory powers can and should be the focus right now.

After making the point that this is an extraordinary situation, the analysis inevitably demands extraordinary rules. As the article states: “AI cannot be governed like any previous technology”.

Not only can many existing rules be used, but applying them should actually be the focus right now.

It took regulators years to fully break down and understand the value chain of digital platforms to effectively regulate them with detailed and ambitious rules like the EU’s DMA. Because AI sits atop a range of parts of the tech value chain that are already being regulated, this type of value chain regulatory breakdown is already possible through existing means for AI.

The key data inputs are already regulated by rules governing how tech companies (including AI companies) can collect and use data (e.g., privacy/data protection regulations) and whether they use it fairly (e.g., copyright rules). Much of the processing is covered by various recent algorithm rules (e.g., the EU’s recent DSA) and fairness rules built into consumer protection. The outputs produced are then regulated through consumer protection rules and content regulations (e.g., liability rules, transparency rules, etc.). The market structure is regulated by traditional competition principles both in its models and its infrastructure (cloud/chips).

AI creates unique issues, but regulators are already grappling with how they fit into existing regulatory powers. In competition, for example, the unique AI issue of algorithmic collusion is a challenge, but the Commissioner of the FTC has written academic papers about this for years already, and the FTC and DOJ likely only need existing abuse of dominance-type powers to intervene if they find evidence of this. Similarly, consumer protection regulators don’t need new powers to tackle new forms of unfairness they find (see the Norwegian regulator’s view on this here as an example).

The article then somewhat dismisses explicit AI legislation as “inadequate”. Ultimately, I agree it is inadequate, but this is largely because it is too early to do much more than set some basic rules of the road, ban high-risk use cases, and draw out some compliance measures, as the forthcoming EU AI Act already does.

The article states AI regulation is in its “infancy”, but the technology is, too, and stating that it will evolve so quickly we won’t be able to adapt — even as much of the value chain of AI is already covered by existing powers — is admitting defeat before you even know who you’re fighting.

Their take: AI companies need more of a seat at the table.

My take: They have plenty and don’t have some magic insight that warrants more.

The recommended governance structure is one in which AI companies sit at the table. “If global governance of AI is to become possible, the international system must move past traditional conceptions of sovereignty and welcome technology companies to the table.” In fairness, there are later explanations that this doesn’t override the need for governments to make the final, democratically accountable decisions and that civil society and the larger policy ecosystem are included.

But there are two assumptions in the idea that AI companies should be given an elevated role beyond what will be, and already is, a significant role (they were invited to the White House to announce self-regulatory principles!).

Firstly, it assumes AI companies have some secret knowledge inaccessible to the rest of us or the government. The reality is that they, too, are just speculating about AI’s impact. Often, they don’t even understand why their own AI does some things it does. Many of the most prominent AI leaders are even quite open about this.

Secondly, it assumes a very common perception that tech companies are the masters of the universe while the government is hapless about technology. This is a very 2010s view. The article quotes Ted Cruz saying Congress “doesn’t know what the hell it’s doing”. To be fair, Congressional hearings on tech make this palpably apparent for the Senators in particular. But there are actually some very up-to-speed elected politicians. And more than that, some staff with serious tech policy and AI expertise. And that’s just the political side. The FTC, DOJ, NIST, and other agencies are stacked with tech expertise by now. The EU, UK, India, Singapore, and Australia all have very capable agencies, to name just a few. Civil society has incredible expertise in informing policy discussion, including many people who helped develop AI technology themselves.

Companies already have a seat at the table. How would we bake in even more participation, and for what reason? Which companies should be included? Just the foundation models? What about NVIDIA? How is the open-source community included? I have many questions. But mainly I question the underlying premise of “moving past traditional conceptions of sovereignty”.

Their take: Global governance should be the central focus.

My take: This is all well and good, but it’s impractical at best and a distraction at worst.

Stepping back, the implication of the article is that global governance is the most urgent missing piece right now. The problem? It’s totally impractical. In the US, we can’t even get federal privacy legislation passed. Internationally, the US is leading a breakdown of its free market principles on industrial policy, driving a wave of nationally self-interested innovation and investment policy at total odds with the idea of coordinated global governance for anything. And that’s amongst allies, let alone the continued decline in cooperation between the world’s two superpowers (the US is designing rules like chip restrictions specifically to hamstring Chinese AI - not quite the environment for global governance).

This leaves the option open for either smaller blocs (which would not reduce the AI arms race, and may even make it more acute) or new-but-toothless governance structures that exist only to guide a “precautionary” approach and are unlikely to have the tools to enforce it. This would be all well and good, but its very nature makes it unable to match the size of the problem the article describes. Moreover, as the article notes, these kinds of things already exist in the form of forums like GPAI.

As I said at the start, this article’s central recommendation (global governance with three main priorities) is reasonable — even good. But this is far from the central focus of AI policy, and rightly so. The focus needs to stay on what new issues AI creates within existing policy and sector-level frameworks to enable quick and real enforcement and where specific gaps need to be closed as the technology evolves.

Altogether, if this article is designed to raise awareness of the size of the governance challenge in AI, I welcome it. But its underlying view deserves some pushback: that the solution to the challenge is to upend governance principles now because we’re so certain of AI’s world-changing role, that this upending should be done in favor of more power for companies, and that the US and China should cooperate in the AI arms race.

As I said in my first edition on generative AI - everybody needs to calm down.

HMM, INTERESTING

Top 5 - The eye-catching reads

Big Tech, Concentrated Power, and the Political Economy of Open AI: This super interesting paper co-written by Meredith Whittaker (one of my tech policy rebels) explores how “open” is being used cynically by some companies to grow their influence over policy and to reap the messaging benefits without truly being “open”.

BEUC on personalized pricing: A position paper from the consumer group calling for a general prohibition on adjusting pricing based on personal data. The crackdown on behavioral ads is underway, and personalized pricing is a smaller but underrated new area of interest.

Mozilla Foundation on the carbon footprint of the internet: Some interesting stats looking at things like video calls, podcasts, streaming video, and of course, AI. It also helpfully includes some tips (that align with my interests): turning off video on a Zoom call reduces the carbon footprint of that call by 96%!

TikTok Shop’s logistics dilemma (paywalled): Tech in Asia highlights a challenge for TikTok: Should they match their Southeast Asian e-commerce peers by building fulfillment capabilities? It’d be very interesting if they did. Many social platforms have experimented and failed with shopping, but developing logistics (something the others have never done) would be a real statement of intent.

Do we have social credit now, too?: The social credit system was written up as dystopian when implemented in China. But do we basically have this in the West anyway?

AND, FINALLY

My top charts of the week

Rolex index is crashing

A better signal of the tech labor recession than the NASDAQ

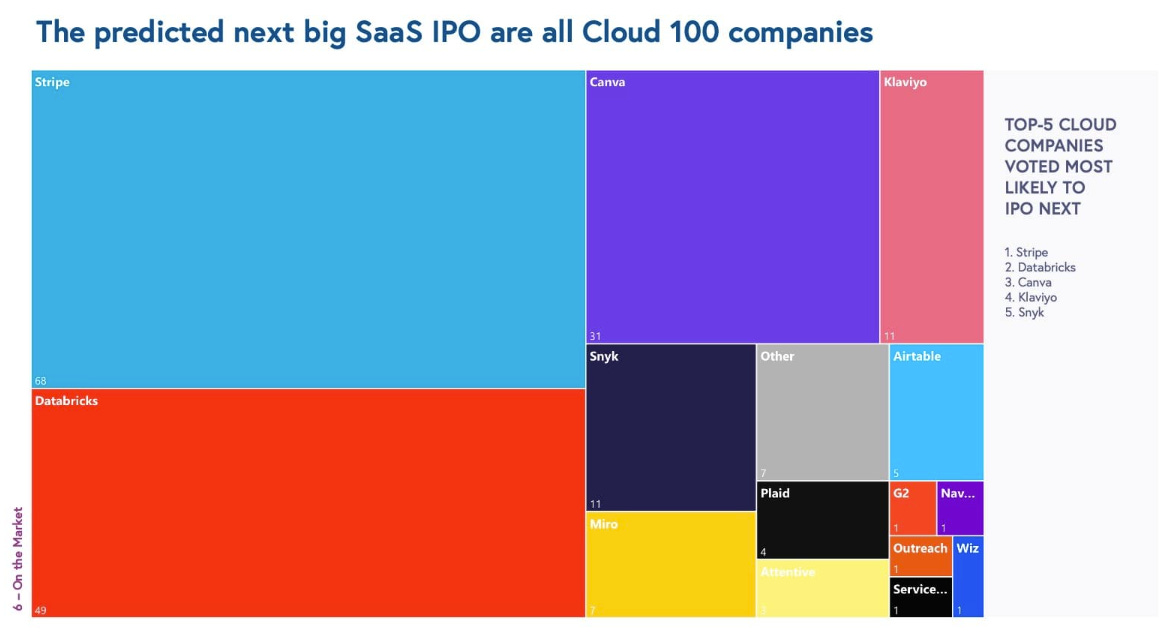

Cloud SaaS goes public

Predictions of the next big SaaS IPOs from this Bessemer report (with lots of other nice Cloud visuals)

Friday is WFH day

WFH is dropping across the board, but the week’s structure hurts my sensibilities as a consultant accustomed to only being in the office on Fridays!